Dynamic time-locking mechanism in the cortical representation of spoken words

Authors: Nora A., Faisal A., Seol J., Renvall H., Formisano E., Salmelin R.

DOI: 10.1523/ENEURO.0475-19.2020

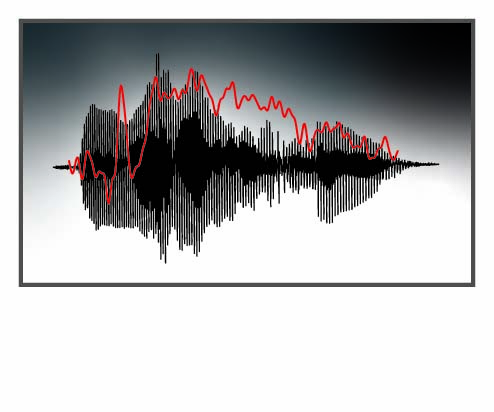

Abstract: Human speech has a unique capacity to carry and communicate rich meanings. However, it is not known how the highly dynamic and variable perceptual signal is mapped to existing linguistic and semantic representations. In this novel approach, we utilized the natural acoustic variability of sounds and mapped them to magnetoencephalography (MEG) data using physiologically-inspired machine-learning models. We aimed at determining how well the models, differing in their representation of temporal information, serve to decode and reconstruct spoken words from MEG recordings in 16 healthy volunteers. We discovered that dynamic time-locking of the cortical activation to the unfolding speech input is crucial for the encoding of the acoustic-phonetic features of speech. In contrast, time-locking was not highlighted in cortical processing of non-speech environmental sounds that conveyed the same meanings as the spoken words, including human-made sounds with temporal modulation content similar to speech. The amplitude envelope of the spoken words was particularly well reconstructed based on cortical evoked responses. Our results indicate that speech is encoded cortically with especially high temporal fidelity. This speech tracking by evoked responses may partly reflect the same underlying neural mechanism as the frequently reported entrainment of the cortical oscillations to the amplitude envelope of speech. Furthermore, the phoneme content was reflected in cortical evoked responses simultaneously with the spectrotemporal features, pointing to an instantaneous transformation of the unfolding acoustic features into linguistic representations during speech processing.

The full article is available via the DOI above.